This is part of the Beyond The Shiny New Toys series where I write about AWS re:Invent 2019 announcements.

Networking in AWS has continuously evolved to support a wide range of customer use cases. Back in the early days of EC2, all customer instances were in a flat EC2 network called EC2-Classic (there is still documentation that talks about this). 10 years ago, AWS introduced VPC, which fundamentally changed the way we think about networking and rapidly accelerated the adoption of public cloud.

Since the introduction of VPC, the networking landscape within AWS has evolved pretty rapidly. You started getting capabilities such as VPC peering, NAT Gateways, and private endpoints for AWS services (such as S3 and DynamoDB) within your VPC (so that traffic to those services does not leave your VPC). AWS also launched PrivateLink, which allows your applications running in a VPC to securely access other applications (yours and third-party). And with the acquisition of Annapurna Labs, AWS started pushing network capabilities even further with 100 Gbps networking, faster EBS-optimized performance, and transparent hardware-based encryption (with almost no performance overhead for your workloads).

New Networking Capabilities

While the AWS networking stack continues to improve throughout the year, re:Invent 2019 had its fair share of networking announcements. Here are some of the new features that I found particularly interesting.

Transit Gateway Inter Region Peering

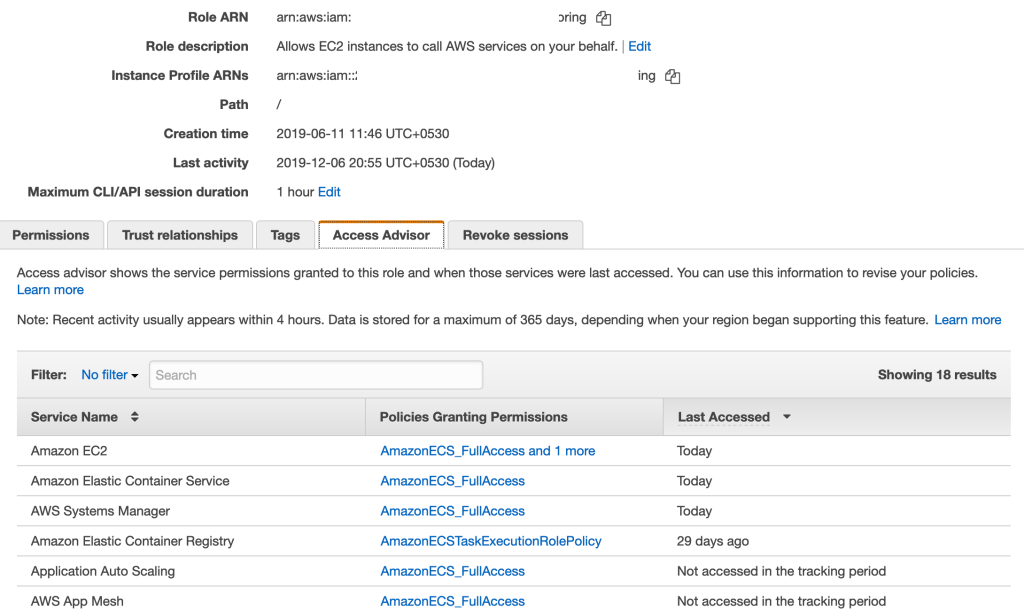

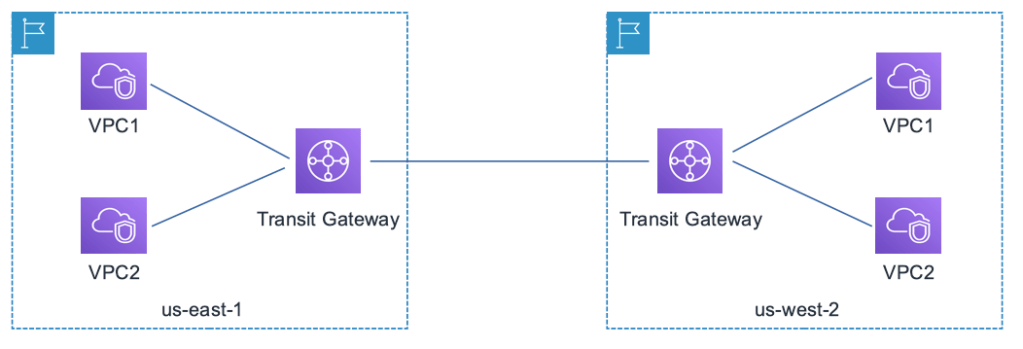

AWS Transit Gateway allows you to establish a single gateway on AWS and connect all your VPCs, your data center (through Direct Connect or VPN) and office locations (VPN). You no longer have to maintain multiple peering connections between VPCs. This changes your network topology to a hub-and-spoke model, as depicted in the accompanying diagram.

So, if you have multiple VPCs that need to connect to each other (and to on-premises data centers or branch offices), Transit Gateway simplifies the network topology. However, if you are operating in multiple AWS regions (which most large customers do) and want to build a global network, then you need connectivity between the Transit Gateways.
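To get a feel for why the hub-and-spoke model is simpler, here is a rough back-of-the-envelope sketch. It assumes every VPC needs to reach every other VPC; since VPC peering is not transitive, a full mesh needs one peering connection per pair of VPCs, whereas a Transit Gateway needs one attachment per VPC. The function names are made up for this illustration.

```python
def full_mesh_peerings(vpc_count: int) -> int:
    """Peering connections needed when every VPC peers directly with every other VPC."""
    return vpc_count * (vpc_count - 1) // 2


def hub_and_spoke_attachments(vpc_count: int) -> int:
    """Attachments needed when every VPC connects once to a central Transit Gateway."""
    return vpc_count


for n in (5, 10, 50):
    print(f"{n} VPCs: {full_mesh_peerings(n)} peerings vs "
          f"{hub_and_spoke_attachments(n)} attachments")
# 5 VPCs: 10 peerings vs 5 attachments
# 10 VPCs: 45 peerings vs 10 attachments
# 50 VPCs: 1225 peerings vs 50 attachments
```

The mesh grows quadratically with the number of VPCs, while the hub-and-spoke count grows linearly.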

This type of cross-region connectivity between Transit Gateways was not possible earlier. Now, with Transit Gateway peering, you can easily connect multiple AWS regions and build a global network.

Traffic through the peering connection is automatically encrypted and is routed over the AWS backbone. At the time of writing, this is available in the US East (N. Virginia), US East (Ohio), US West (Oregon), EU (Ireland) and EU (Frankfurt) regions, and I would expect AWS to roll this out globally based on customer demand.

Building a Global Network

Building a Global Network that spans multiple AWS regions and connects your data center and branch offices has become simpler with the following services/features:

- AWS Site-to-Site VPN

- The new Transit Gateway Peering

- Direct Connect Gateway (requires a special interface called a Transit Virtual Interface, with a subscription of 1 Gbps or above. For sub-1 Gbps connections with Transit Gateway, check out this solution).

There were also quite a few other features announced around re:Invent 2019 that up the game when it comes to building a scalable and reliable Global Network. A few notable mentions:

- Accelerated Site-to-Site VPN: This uses AWS Global Accelerator to route traffic through AWS edge locations and the AWS global backbone, which improves VPN performance from branch offices.

- Multicast workloads: Transit Gateway now supports multicast traffic between the attached VPCs, a feature many large enterprises have asked for to run clusters and media workloads.

- Visualizing your Global Network: If you are building out such a complex Global Network, you need a simple way to visualize your network and take actions. The new Transit Gateway Network Manager allows you to centrally monitor your Global Network.

If you would like to understand more about various architecture patterns, check out this deep dive talk by Nick Mathews at re:Invent 2019.

Application Load Balancer – Weighted Load Balancing

When you are rolling out a new version of your app, you need some kind of strategy to transition users from the older version to the new version. Depending upon your needs, you employ techniques such as Rolling Deployments, Blue/Green Deployments or Canary Deployments.

All of these techniques require some component in your infrastructure to selectively route traffic between the different versions. There are a few ways in which you can do this in AWS today:

- Using a Route 53 DNS routing policy. For example, you can use the Weighted Routing policy to control how much traffic is routed to each resource (say, two different ALBs/ELBs running different versions of your app).

- You could have different Auto Scaling groups attached to the same ALB and adjust the size of each group to shape your traffic.

With this new “Weighted Load Balancing” feature in ALB, you can achieve the same with one ALB.

- You create multiple Target Groups, each with EC2 Instances running a different version of your app.

- Under the “Listeners” of your ALB, you add the “Target Groups” and assign different weights to different Target Groups.

Once you do the above, ALB splits the incoming requests based on the weights and routes them to the Target Groups accordingly.
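If you prefer to script this instead of clicking through the console, the listener's forward action takes a list of Target Groups with weights. Below is a minimal sketch that only builds that action as a plain dictionary and works out the share of traffic each Target Group would get. The helper names and the ARNs are placeholders I made up for illustration; the dictionary shape follows the `ForwardConfig` structure of the ELBv2 API as I understand it, where each weight is in the range 0 to 999.

```python
def weighted_forward_action(weights_by_target_group_arn):
    """Build a 'forward' listener action that splits traffic across Target Groups by weight."""
    target_groups = []
    for arn, weight in weights_by_target_group_arn.items():
        if not 0 <= weight <= 999:
            raise ValueError(f"weight for {arn} must be between 0 and 999")
        target_groups.append({"TargetGroupArn": arn, "Weight": weight})
    return {"Type": "forward", "ForwardConfig": {"TargetGroups": target_groups}}


def expected_share(action):
    """Fraction of requests each Target Group receives: its weight over the sum of all weights."""
    groups = action["ForwardConfig"]["TargetGroups"]
    total = sum(group["Weight"] for group in groups)
    return {group["TargetGroupArn"]: group["Weight"] / total for group in groups}


# Placeholder ARNs, not real resources.
action = weighted_forward_action({"arn:placeholder:app-v1": 90, "arn:placeholder:app-v2": 10})
print(expected_share(action))
# {'arn:placeholder:app-v1': 0.9, 'arn:placeholder:app-v2': 0.1}
```

With boto3, an action like this would typically be passed as `DefaultActions=[action]` to the `elbv2` client's `modify_listener` call along with your listener's ARN. Treat that as a starting point and check it against the current API documentation before using it.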

Migration Use Cases

One other great use case for this feature is migration. Target Groups are not limited to just EC2 Instances. Targets can be EC2 Instances, Lambda functions, containers running on ECS/EKS or even just IP addresses (say you have a VM in a data center). So, you can use this capability to migrate from one environment to another by gradually shifting traffic.
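A gradual shift like this boils down to a sequence of weight pairs that you apply one step at a time, watching your metrics between steps. Here is a small sketch of such a schedule. The function name and the fixed-step approach are my own assumptions for illustration, not something AWS prescribes.

```python
def shift_schedule(step_percent: int):
    """Yield (old_weight, new_weight) pairs that move traffic from old to new in fixed steps."""
    if not 0 < step_percent <= 100:
        raise ValueError("step_percent must be between 1 and 100")
    new_weight = 0
    while new_weight < 100:
        # Cap at 100 so the last step never overshoots.
        new_weight = min(100, new_weight + step_percent)
        yield 100 - new_weight, new_weight


print(list(shift_schedule(25)))
# [(75, 25), (50, 50), (25, 75), (0, 100)]
```

Each pair would become the weights of the old and new Target Groups for that stage of the migration. If something looks wrong, you go back to the previous pair.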

If you would like to learn more about this new feature, check out this blog.

Application Load Balancer – LOR Algorithm

The default algorithm that an Application Load Balancer (ALB) uses to distribute traffic is Round Robin. This fundamentally assumes that the processing time for all types of requests of your app is the same. While this may be true for most consumer-facing apps, a lot of business apps have varying processing times for different functionalities. This leads to some instances being overutilized and others underutilized.

ALB now supports the Least Outstanding Requests (LOR) algorithm. If you configure your ALB with the LOR algorithm, ALB monitors the number of outstanding requests of each target and sends each new request to the target with the fewest outstanding requests. If you have some targets (such as EC2 Instances) that are currently processing long requests, they will not be burdened with more requests.
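To make the difference concrete, here is a toy simulation. This is not how ALB is implemented internally, just a sketch of the two selection rules, with all names invented for the example. Target "a" starts with three long-running requests in flight, three new requests arrive, and nothing completes in the meantime.

```python
from itertools import cycle


def make_round_robin(targets):
    """Return a picker that hands out targets in a fixed rotation, ignoring their load."""
    order = cycle(targets)
    return lambda outstanding: next(order)


def least_outstanding(outstanding):
    """Pick the target that currently has the fewest in-flight requests."""
    return min(outstanding, key=outstanding.get)


def route(new_requests, outstanding, pick):
    """Route new requests one by one; no request completes during the simulation."""
    outstanding = dict(outstanding)  # work on a copy so the starting state can be reused
    for _ in range(new_requests):
        outstanding[pick(outstanding)] += 1
    return outstanding


busy = {"a": 3, "b": 0}  # "a" is stuck on three long requests
print(route(3, busy, make_round_robin(["a", "b"])))  # {'a': 5, 'b': 1}
print(route(3, busy, least_outstanding))             # {'a': 3, 'b': 3}
```

Round Robin keeps piling work onto the already busy target, while LOR sends all three new requests to the idle one. As far as I know, the real setting is a Target Group attribute named `load_balancing.algorithm.type` with the value `least_outstanding_requests`; check the documentation for the exact details.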

This is available to all existing and new ALBs across all regions. A great feature! There are some caveats too (such as how this works with Sticky Sessions). Check out this documentation for more details.

Better EBS Performance

These are the things that I love about being on public cloud. Any EBS-intensive workload automatically gets better network throughput. At no additional cost. Without me doing anything. Just like that!

Well, those are the announcements that I found interesting in the Networking domain. Did I miss anything? Let me know in the comments.